AI is getting a little closer to revolutionizing closed captioning for those with disabilities

Photo illustration by Stacker // Shutterstock

Photo illustration with hands holding tablet, with a speech bubble on the screen.

Artificial intelligence has lived rent-free in the minds of Wall Street traders, workers, and even parents with school-aged children over the past year as new tools emerge almost daily.

Many AI developments have sparked resistance and anxiety, dredging up concerns that they could replace well-paying jobs or erode the quality of education. However, some advancements promise to promote equity and accessibility through new technologies, including AI-powered voice-to-text transcription.

accessiBe analyzed data from captioning service 3Play Media to show how error rates in automated closed captioning are declining. This is a promising development for a future in which closed captions might be more efficiently produced and more widely available. The report draws on more than 100 hours of transcription content representing an array of speaking accents and locales, with transcriptions from higher education, tech, consumer goods, cinema, sports, and other industries.

For many, closed captions are a helpful tool—and one that an increasing number of Gen Z and millennials prefer when watching videos, according to a 2023 YouGov survey, with respondents saying they enhance their concentration or help them understand thick accents.

Transcribed captions also make videos accessible to the estimated 15.5% of U.S. adults with difficulty hearing, per 2022 National Health Interview Survey data, and Congress requires video programming distributors to include them on TV programs. Transcribing is manual work. For decades, creating captions for live TV and other video content has been the work of the more than 20,000 workers in the closed captioning and court reporters services industry.

AI has fueled the rapid advancement of audio transcription tools in consumer and corporate software. Apple reportedly plans to introduce AI transcription to its voice memos and notes apps in the next update to its iPhone operating system, iOS 18. The update would potentially bring instantaneous transcription to phone apps used by more than 2 billion people. Other companies like Zoom have also added AI-powered features, including AI transcription of video calls.

Several of the most prominent providers of the AI engines behind these services have seen their AI become even more accurate in the last year, according to a recent study by 3Play Media.

Dom DiFurio // accessiBe

Tools making the most significant improvements in word errors

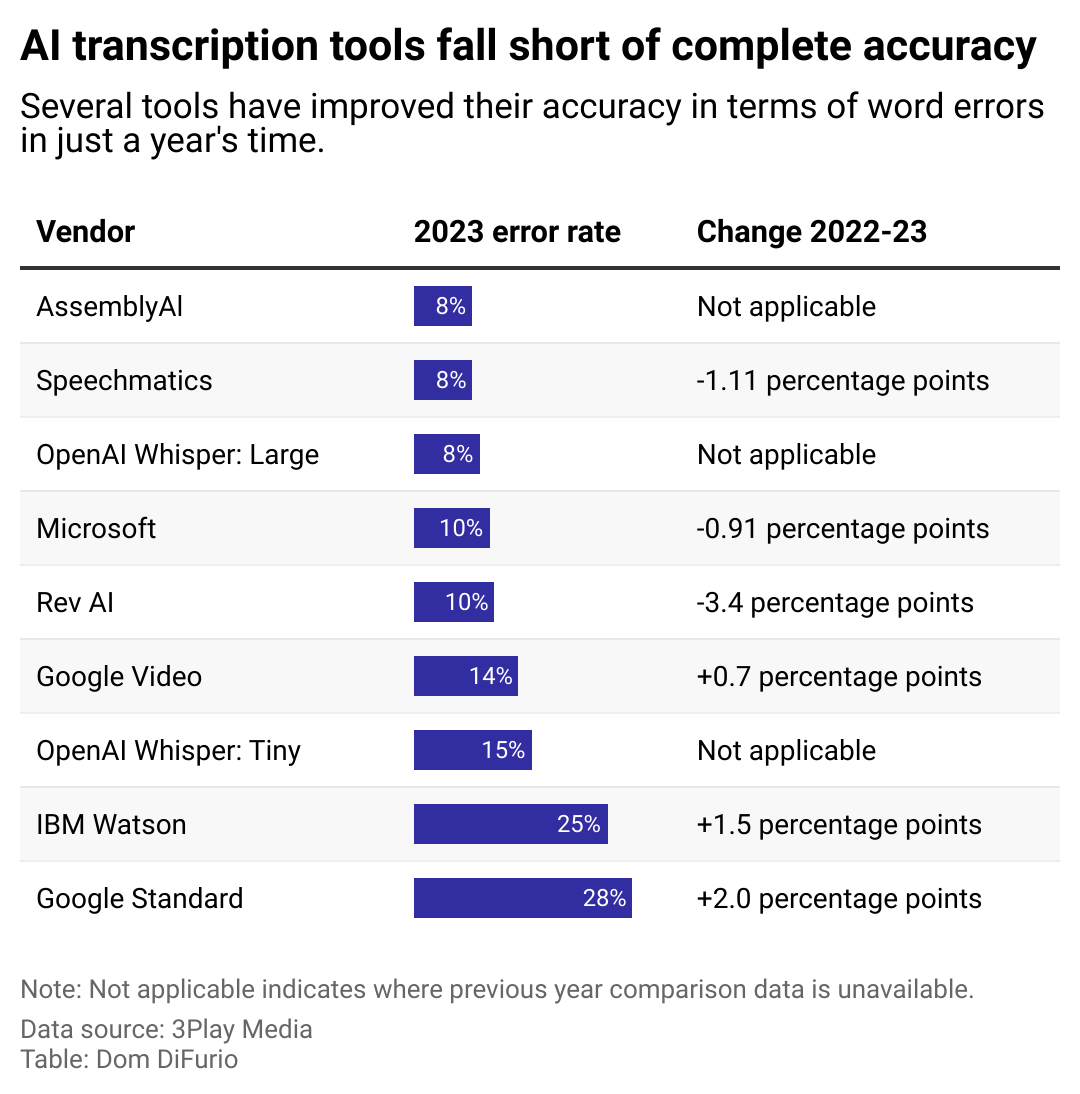

A bar chart showing the percentage error rates for how many times leading AI models will get a word wrong when transcribing audio. AssemblyAI, Speechmatics, and OpenAI have 8% error rates, the lowest among the companies studied. Google and IBM have the highest, at 28% and 25% respectively.

As shown in the chart above tracking word error rates, Google and IBM’s audio transcription AI performed worse in 2023 than in 2022. Google Standard had a 28% error rate while Google Video had a 14% error rate, increases of 2 percentage points and 0.7 percentage points since 2022, respectively. Meanwhile, IBM Watson had a 25% error rate, a 1.5 percentage point increase since 2022.

However, other platforms saw slight improvements with lower error rates over the same period, including Rev AI (-3.4 percentage points), Microsoft (-0.91 percentage points), and Speechmatics (-1.11 percentage points). They all had an error rate of 10% or less for 2023.

Other transcription tools tracked include AssemblyAI (8% error rate), the multilingual OpenAI Whisper: Large (8% error rate), and the English-only OpenAI Whisper: Tiny (15% error rate), none of which had 2022 data for a year-over-year comparison.

Word error rates describe the number of times a transcription engine might interpret the wrong word in an uploaded audio file. Generally, the models 3Play Media analyzed made some progress from 2022 to 2023 in reducing the number of word errors they make, but not in all cases.
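For readers curious how such a figure is typically computed, here is a minimal sketch of the conventional word error rate formula: the number of substituted, deleted, and inserted words divided by the number of words in a human-verified reference transcript. The story does not describe 3Play Media's exact scoring method, so the function name, the lowercasing step, and the sample sentences below are illustrative assumptions rather than the study's procedure.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Conventional WER: word-level edit distance / reference word count.

    Assumption: words are compared case-insensitively after splitting on
    whitespace. Real scoring pipelines may normalize text differently.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so
    # far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn i reference words into an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from the output
                curr[j - 1] + 1,     # extra word inserted in the output
                prev[j - 1] + cost,  # match, or one word swapped for another
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one wrong word in a six-word reference.
reference = "please turn on the closed captions"
hypothesis = "please turn on the close captions"
wer = word_error_rate(reference, hypothesis)
print(f"{wer:.1%}")
```

By this measure, an 8% error rate corresponds to roughly eight word-level mistakes for every 100 words in the reference transcript.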

Another factor that affects AI’s reliability for transcription is its frequency of punctuation errors, which affect readability. In this realm, OpenAI’s model is most accurate, but it is still only 85% reliable.

OpenAI offers multiple speech recognition models of varying complexity and power. The “tiny” version only performs English language transcription, whereas its large model is multilingual. The company launched these for the first time in 2022, and 3Play Media only studied them for the 2023 year. AssemblyAI’s speech recognition tool was also released more recently and has no comparable prior-year data. Developers trained its 2023 speech recognition software on 1 million hours of audio, and it also handles English language transcription.

In a testament to how quickly advancements in the space are moving, AssemblyAI released a successor just this year based on 12 times the training data, which it advertises as multilingual and as “hallucinating” 30% less often than OpenAI’s competing service.

AI hallucination refers to large language models’ tendency to invent misstatements in their output. In a chatbot, this might look like a confidently stated fact that isn’t true. These remaining shortcomings, and the current state of AI development, make it difficult to rely on AI for accessibility purposes.

That’s a major drawback for companies that take accessibility and the surrounding laws and requirements seriously, giving them pause before entirely handing the reins to AI for transcribing audio.

panuwat phimpha // Shutterstock

Pure AI transcription still isn’t fully compliant with the ADA

Person speaking into mobile phone; the screen shows text that says, speak now, and has a microphone symbol.

Despite their advancements, AI transcription tools still aren’t on par with human accuracy. People in the production loop often must make substantial edits to comply with legal guidelines for web content accessibility under the Americans with Disabilities Act.

It’s generally accepted that to achieve ADA compliance, websites should follow the Web Content Accessibility Guidelines that outline best practices for auto-generated captions. Having humans manually review automated captions helps ensure that a video’s information is fully and accurately represented, for example.

Even as AI companies advance toward more accurate models, the dream of instantaneous on-demand captions for Americans with disabilities may still be a ways away.

Story editing by Alizah Salario. Copy editing by Kristen Wegrzyn.

This story originally appeared on accessiBe and was produced and distributed in partnership with Stacker Studio.